floyoofficial

19.0k

Marketing

Photography

Production

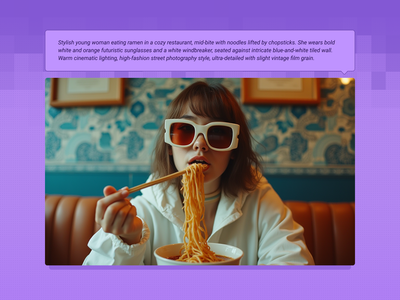

Text2Image

Z-Image Turbo

Z-Image Turbo: Fast Image Generation in Seconds

Fast Image Generation in Seconds

floyoofficial

5.1k

Animation

Filmmaking

First and last frame

Game Development

Image to Video

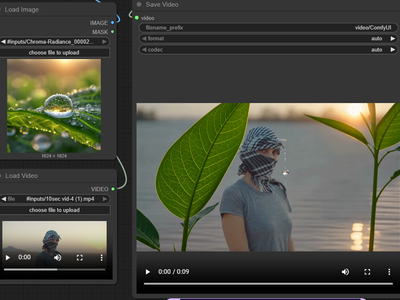

Wan2.2

Wan2.2 14b - Image to Video w/ Optional Last Frame

Generate high quality video from a start frame, as well as an optional end frame with this Wan2.2 14b Image to Video workflow!

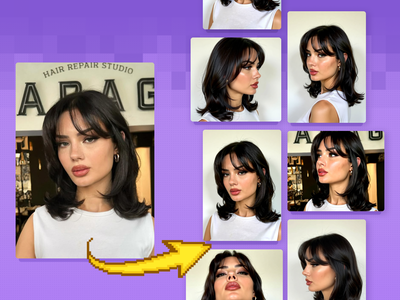

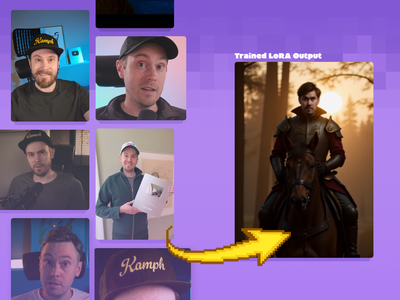

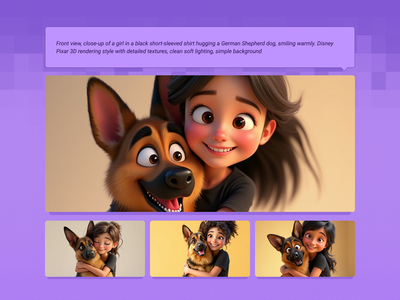

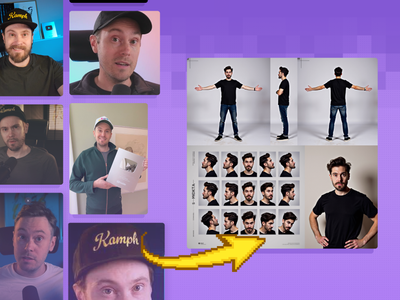

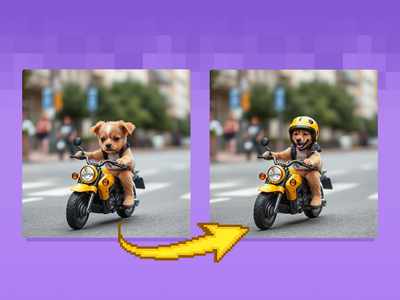

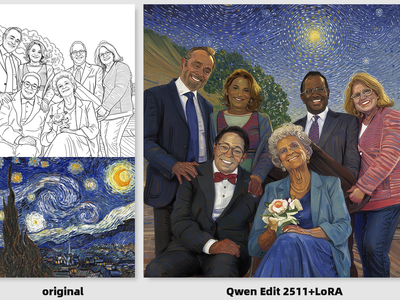

Qwen Image Edit 2509 for LoRA Dataset

Create Character LoRA Dataset

floyoofficial

13.2k

API

Floyo API

Image2Image

Nano Banana Pro

Nano Banana Pro Text-to-Image: Gemini 3 Pro

Google just released Nano Banana Pro, and honestly, it's a pretty big step up from the original Nano Banana. The main thing? It can actually put legible text in images now. Like, real text that you can read, not the garbled nonsense most AI models spit out.

floyoofficial

3.9k

Image to Video

Wan

Wan2.1 FusionX Image2Video

Created by @vrgamedevgirl on Civitai, please support the original creator!

floyoofficial

3.9k

API

Flux

LoRa Training

Fast LoRA Training for Flux via Floyo API

FLUX is great at generating images, but locking in a specific aesthetic or character is easier with a LoRA. Here's how to create your own.

Wan 2.6 Reference to Video

floyoofficial

4.8k

Animation

Filmography

Grok

Image2Video

Grok Imagine for Image to Video

Turn images into excellent video using Grok Imagine.

SeedVR2 Upscale: Upscale to Extreme Clarity

Upscale to Extreme Clarity

360

Image2Video

Wan2.1

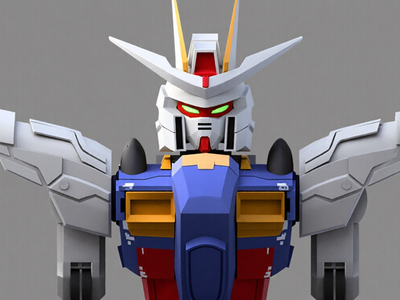

Image to Character Spin

See an image of a character spin 360 degrees.

Key Inputs:

- Image reference: Use any JPG or PNG showing your subject clearly.
- Width & height: The default image resize resolution (480x832) works best for portrait images. If the image is landscape, change it from 480x832 to 832x480.
- Prompt: Follow the example format: "The video shows (describe the subject), performs a r0t4tion 360 degrees rotation."
- Denoise: The amount of variance in the new image. Higher values give more variance.
- File Format: H.264 and more.
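
The width/height and prompt guidance above can be sketched in a few lines of Python. This is only an illustration, not part of the workflow itself: the function names `pick_resolution` and `build_spin_prompt` are hypothetical, and treating square images like portrait images is an assumption the card does not address.

```python
from typing import Tuple

# Resolutions quoted in the card: portrait default and its landscape swap.
PORTRAIT = (480, 832)
LANDSCAPE = (832, 480)


def pick_resolution(image_width: int, image_height: int) -> Tuple[int, int]:
    """Return (width, height) for the resize step based on image orientation.

    Square inputs fall back to the portrait default (an assumption).
    """
    return LANDSCAPE if image_width > image_height else PORTRAIT


def build_spin_prompt(subject: str) -> str:
    """Fill the card's example prompt format with a subject description."""
    return f"The video shows {subject}, performs a r0t4tion 360 degrees rotation."


if __name__ == "__main__":
    print(pick_resolution(1920, 1080))
    print(build_spin_prompt("a knight in silver armor"))
```

The `r0t4tion` token is kept exactly as written in the example prompt, since the card presents it as part of the required format.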

Wan2.2 Animate Character

Wan 2.2

floyoofficial

2.3k

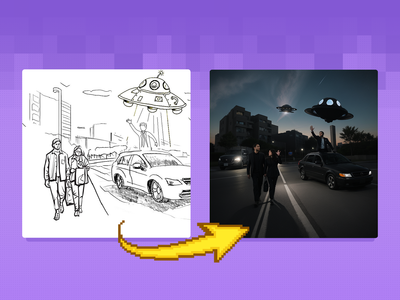

Flux Kontext

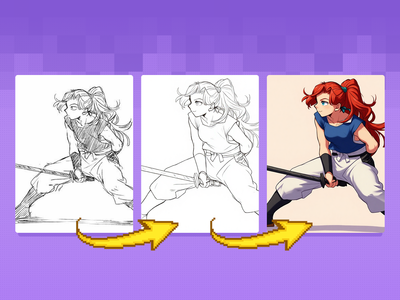

Lineart

Previz

Sketch to Image

Flux Kontext Sketch to LineArt + Color Previz

Quickly convert rough sketches into polished lineart and colorized concepts. Ideal for early storyboards, character designs, scene planning, and other visual explorations.

floyoofficial

1.3k

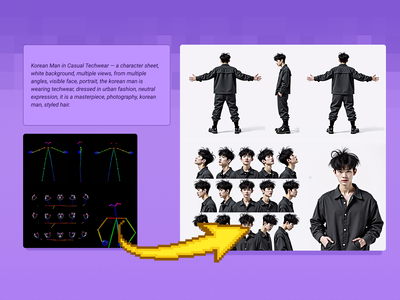

Character Sheet

Controlnet

Flux

Flux Text to Character Sheet

Create a character and a range of consistent outputs suitable for establishing character consistency, training a model, and ensuring consistency throughout multiple scenes.

Key Inputs:

- Image reference: Use the included pose sheet to show a range of positions.
- Prompt: As descriptive a prompt as possible.

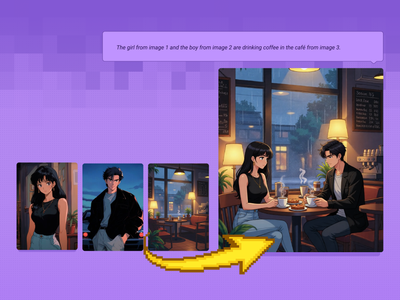

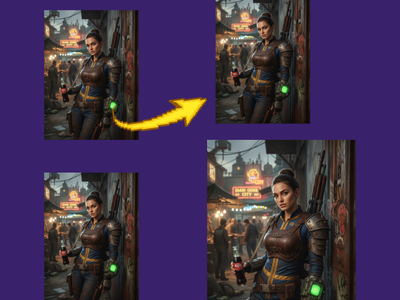

Qwen Image Edit 2509: Combine Multiple Images Into One Scene for Fashion, Products, Poses & more

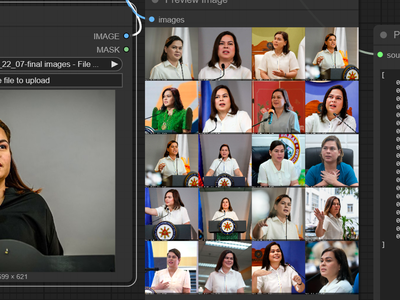

Qwen Image Edit 2509 Face Swap and Inpainting

Face Swap and Inpainting

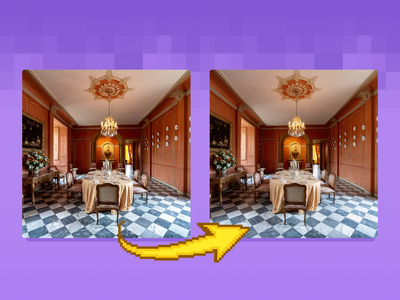

Z-Image Turbo Controlnet 2.1 Image to Image

Image to Image

floyoofficial

4.7k

Filmmaking

LTX 2

LTX 2 Fast

Open Source

Text2Video

Videography

LTX 2 19B Fast for Text to Video

A text-to-video model using LTX 2.

floyoofficial

2.0k

Animation

Image to Video

Kling 2.6

Kling 2.6 Standard Motion Control

Create excellent movement for your characters using Kling 2.6 Standard Motion Control.

LTX 2.3 Pro Image to Video

LTX 2.3

Qwen Image Edit - Edit Image Easily

floyoofficial

3.3k

Outpainting

Video to Video

Wan

Wan2.1 and VACE for Video to Video Outpainting

Wan VACE video outpainting invites you to break free from the limits of the frame and explore endless creative possibilities.

ComfyUI Flux LoRA Trainer

Created by @Kijai on GitHub, please support the original creator!

Flux Dev - Text to Image w/ Optional Image Input

floyoofficial

1.4k

Animation

Filmmaking

Image2Video

LTX 2 Pro

Video Editing

LTX 2 Pro API for Image to Video

Image to Video using LTX 2 Pro API

API

Flux

Text to Image

Multi-Image Flux Ultra, Pro, Dev, Recraft+

Start with a prompt, and get a different render from a range of unique models at the same time.

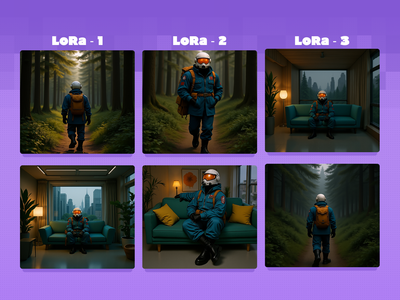

Animation

Filmmaking

Flux

Game Development

LoRA

Text to Image

Flux Character LoRA Test and Compare

Test and compare multiple epochs of a character LoRA side by side with preset prompts.

When training a LoRA, you'll usually have a few checkpoints throughout the process to test. This workflow lets you load up to 4 LoRAs to test side by side, making it easier to determine which one is right for you!

Key Inputs:

- LoRA Loaders: Load each LoRA epoch for the same character in up to 4 groups.
- Groups Bypasser: Enable/disable groups as needed. If you only have 2 epochs to test, disable the back 2 groups!
- Triggerword: Simply add the trigger word for your LoRA and it will auto-fill in the default prompts. Leave blank if you're using your own custom prompts that include the trigger word.
- LoRA Testing Prompts: Default prompts work well to get an idea of how your character will look in different situations, but feel free to replace them with your own prompts (max 4).
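
The trigger-word behavior described above can be pictured with a small Python sketch. Everything here is assumed for illustration: the `{trigger}` placeholder, the sample prompts, and the `fill_prompts` helper are hypothetical and are not taken from the workflow, which handles this inside its own nodes.

```python
from typing import List, Sequence

MAX_PROMPTS = 4  # the card allows at most 4 test prompts

# Hypothetical default prompts; the workflow's real defaults are not shown in the card.
DEFAULT_PROMPTS = (
    "portrait photo of {trigger}, studio lighting",
    "{trigger} walking through a rainy city street at night",
    "{trigger} laughing at a cafe table, candid shot",
    "full body shot of {trigger} standing on a beach at sunset",
)


def fill_prompts(trigger_word: str, prompts: Sequence[str] = DEFAULT_PROMPTS) -> List[str]:
    """Insert the trigger word into each prompt template.

    A blank trigger word leaves the prompts untouched, matching the case where
    custom prompts already contain the trigger word.
    """
    if len(prompts) > MAX_PROMPTS:
        raise ValueError(f"at most {MAX_PROMPTS} prompts are supported")
    trigger = trigger_word.strip()
    if not trigger:
        return list(prompts)
    return [p.replace("{trigger}", trigger) for p in prompts]


if __name__ == "__main__":
    for prompt in fill_prompts("ohwx_character"):
        print(prompt)
```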

floyoofficial

3.1k

API

Image2Image

Nano Banana

Nano Banana 2

Text2Image

Nano Banana 2 - Google's #1 ranked image model

The top-ranked image model on Artificial Analysis and LM Arena. 4K output, text rendering, and subject consistency across 5 characters.

floyoofficial

1.4k

Flux

Kontext

Sketch to Image

Flux Kontext - Sketch to Image

Bring your sketches to life in full color with Flux Kontext!

Key Inputs:

- Load Image: Upload the sketch you want to transform.
- Prompt: Describe the desired output style, such as: “Render this sketch as a realistic photo” or “Turn this sketch into a watercolor painting.”

floyoofficial

1.2k

ace

face

face swap

faceswap

face swapper

sebastian kamph

swap

SMART FACE SWAPPER - Ace++ Flux Face swap

Face swapper built with Flux and ACE++. Works with added details too, like hats and jewelry. Smart features let you describe the swap in natural language.

floyoofficial

3.1k

Controlnet

Flux

Video2Video

Wan2.1

Wan2.1 Fun Control and Flux for V2V Restyle

Create a new video by restyling an existing video with a reference image.

floyoofficial

1.1k

Flux

Flux Kontext

Image2Image

kontext

panorama

Flux Kontext and HD360 LoRA for 360 Degree View

Flux Kontext 360° Workflow - Seamless Panorama Generation

- Input: Simply upload an image in the "Load Image from Outputs" node.
- Output: A 360° panoramic image.

Wan2.6 Image to Video

Image to Video

floyoofficial

1.4k

Image2Video

Start and end frame

Wan2.1

Wan2.1 Start & End Frame Image to Video

Used for image to video generation, defined by the first frame and end frame images.

Video to Video with Camera Control with Wan

Adjust the camera angle of an existing video, like magic.

Character + Outfit → High-End Editorial Shoot

API

Floyo API

Image to Video

Seedance 1.5 Pro

Seedance 1.5 Pro with Draft Mode

Draft mode lets you first experiment at a low cost by generating 480p draft videos

floyoofficial

1.1k

Flux

Text2Image

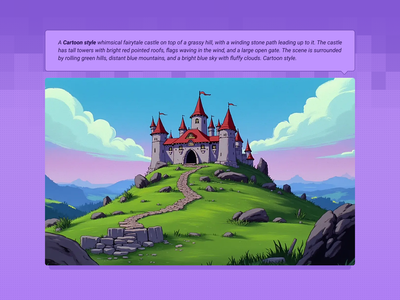

Flux Text to Image

Create original images using only text prompts, which can be simple or elaborate.

Key Inputs:

- Prompt: As descriptive a prompt as possible.
- Width & height: Optimal resolution settings are noted.

floyoofficial

4.0k

Text2Image

Z-Image

Z-image-base

Z-Image Base for Text to Image

Create stunning images using the Z-Image base model (non-distilled).

floyoofficial

1.6k

Recammaster

Video to Video

Wan

Wan2.1 and RecamMaster for V2V Camera Control

Adjust the camera angle of an existing video, like magic.

360° Character Turnaround & Sheet Workflow

Image-to-Video with Reference Video (Prompt-Based Camera Rotation)

FlashVSR Upscale Your Videos Instantly

floyoofficial

2.8k

Flux

Flux.2 Klein

Image2image

FLUX.2 Klein 9B for Image Editing

Unified workflow: one model for text‑to‑image, image‑to‑image, and image editing

floyoofficial

2.4k

Animation

Filmmaking

Image to Video

Lipsync

Marketing

Multitalk

Wan2.1

Wan2.1 FusionX and MultiTalk - Image to Video

Turn any portrait - artwork, photos, or digital characters - into speaking, expressive videos that sync perfectly with audio input. MultiTalk handles lip movements, facial expressions, and body motion automatically.

VibeVoice Text to Speech Single Speaker

VibeVoice

Controlnet

Flux

Image

Image to Image with Flux ControlNet

Transform your images into something completely new, yet retaining specific details and composition from your original using flexible controls.

Key Inputs:

- Image reference: Use any JPG or PNG showing your subject clearly.
- Prompt: As descriptive a prompt as possible.
- Denoise Strength: The amount of variance in the new image. Higher values give more variance.
- Width & height: Try to match the aspect ratio of the original if possible.

Flux

Image

UltimateSD

Upscale

Flux Image Upscaler with UltimateSD

A simple workflow to enlarge & add detail to an existing image.

Key Inputs:

- Image: Use any JPG or PNG.
- Upscale by: The factor of magnification.
- Denoise: The amount of variance in the new image. Higher values give more variance.

VEO3 Future of Video Creation

Flux 2 Text-to-Image Generation

floyoofficial

1.2k

Clothes Swap

Flux

Flux.2 Klein

Image Editing

LanPaint

FLUX.2 Klein 4B and LanPaint for Swap Clothes

Replace clothes using FLUX.2 Klein 4B.

Z-Image Turbo + DyPE + SeedVR2 2.5 + TTP 16k reso

floyoofficial

1.0k

Ace+

Fashion

Flux

Image to Image

Virtual Try-on

Flux Outfit Transfer

Virtual Outfit Try-On with Auto Segmentation

Try virtual clothing on any subject using Flux Dev, Ace Plus, and Redux, with automatic segmentation. Great for concept previews, fashion mockups, or character styling.

Key Inputs:

- Outfit: Load the outfit image you want to apply. Make sure it's high quality, since visible artifacts or distortions may carry over into the final result.
- Actor: Add the subject or character you want to dress. Ideally, use a clear, front-facing image.
- Human Parts Ultra: Choose which parts of the body the clothing should apply to. For example, for a long-sleeve shirt, select: torso, left arm, and right arm. This helps the model align the clothing properly during generation.
- Prompt: The default value works for most outfits, but you may adjust it to describe the desired outfit.

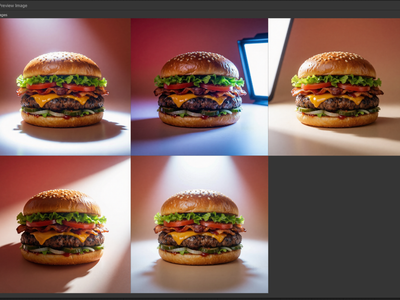

API

Ecommerce

Image2Image

Nano Banana Pro

Product Ads

Nano Banana Pro for Multi Grid View of Product Ads

Create grids of different angles for your ecommerce products.

Create Images Using Qwen Image Edit 2511

Qwen Image edit 2511

floyoofficial

3.0k

Image2Image

Image Editing

Seedream 5.0

Text2Image

Seedream 5.0 Lite Unified for Image Generation

ByteDance's latest image model. Text-to-image, image editing, and multi-reference composition in one workflow.

Text to Image with Multi-LoRA

Create consistent images with multiple LoRA models.

Multi-Angle LoRA and Qwen Image Edit 2509

Kling 3.0 Pro for Image to Video

Turn images into a video using Kling 3.0 Pro

floyoofficial

2.7k

seedance

seedance 2.0

text to video

video generation

Seedance 2.0 - Text to Video

Generate up to 15-second videos with native audio from a text prompt using ByteDance's Seedance 2.0. Pick your aspect ratio, resolution, and duration.

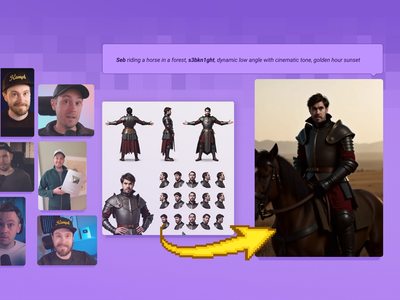

Character Sheet

Controlnet

Flux

Text to Character Sheet with a reference LoRA

Generate a character sheet using a prompt and a LoRA model of a particular person for more accurate renders.

Key Inputs:

- Load Image: Use any JPG or PNG of your pose sheet.
- Prompt: As descriptive a prompt as possible.
- Width & height: Optimal resolution settings are noted at 1280px x 1280px.
- Denoise: The amount of variance in the new image. Higher values give more variance.
- ControlNet Strength: The amount of adherence to the original image. Higher values give more adherence.
- Start Percent: The point in the generation process where the control starts exerting influence. (Have it start later, to let AI imagine first.)
- End Percent: The point in the generation process where the control stops exerting influence. (Have it end sooner, to let AI finish it off with some variation.)
- Flux Guidance: How much influence the prompt has over the image. Higher values give more guidance.
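
To make Start Percent and End Percent more concrete, here is a rough Python sketch of which sampling steps would fall inside the control window. It assumes a simple linear mapping from percent to step index; real samplers may map these percentages through the noise schedule instead, so treat the numbers as an approximation. The `control_window` helper is hypothetical.

```python
def control_window(total_steps: int, start_percent: float, end_percent: float) -> range:
    """Approximate the sampling steps during which the control is active.

    Assumes percentages map linearly onto step indices (a simplification).
    """
    if total_steps <= 0:
        raise ValueError("total_steps must be positive")
    if not 0.0 <= start_percent <= end_percent <= 1.0:
        raise ValueError("expected 0 <= start_percent <= end_percent <= 1")
    first = round(start_percent * total_steps)
    last = round(end_percent * total_steps)
    return range(first, last)


if __name__ == "__main__":
    # Start later (let the model imagine first) and end sooner (allow variation).
    steps = control_window(total_steps=20, start_percent=0.1, end_percent=0.8)
    print(f"control active on steps {steps.start}..{steps.stop - 1} of 20")
```

With 20 steps, a start of 0.1 and an end of 0.8 leaves the first two steps and the last four uncontrolled under this linear assumption.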

floyoofficial

3.0k

FLUX

FLUX.2 Klein

Image2Image

LoRA

FLUX.2 Klein 9B + Realistic Enhanced Details LoRA

Create realistic images with enhanced details using FLUX.2 Klein 9B and a LoRA.

floyoofficial

1.2k

API

Hunyuan

LORA Training

LoRA Training Video with Hunyuan

Hunyuan is great at generating videos, but locking in a specific aesthetic or character is easier with a LoRA.

Flux

LoRa

Text2Image

Text to Image + LoRA model

Create an image from an AI model trained on something specific (a particular figure, outfit, art style, product, etc.) so that those details carry through into the result.

AnimateDiff

Control Image

HotshotXL

SDXL

Video2Video

Video to Video with Control Image

Breathe life into a character from an image reference using motion reference from a video.

Key Inputs:

- Image reference: Use any JPG or PNG showing your subject clearly and the style of your shot.
- Load Video: Use any MP4 that you would like to use for motion reference.

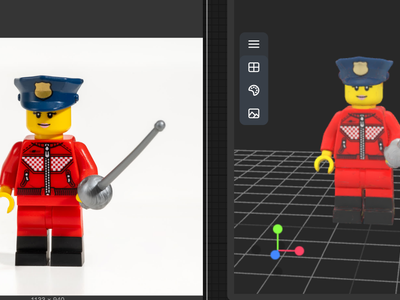

3D View

Animation

Architecture

Filmmaking

Game Development

Hunyuan 3D

Hunyuan3D

Image to 3D

Image to 3D with Hunyuan3D

A simple workflow to create a detailed & textured 3D model from a reference image.

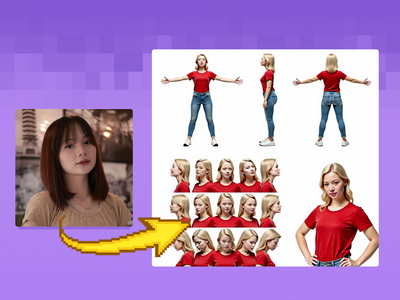

Character Sheet

Image to Image

SDXL

Image to Character Sheet

Generate a character sheet with multiple angles from a single input image as reference.

Key Inputs:

- Image reference: Use any JPG or PNG showing your subject clearly. If you're trying to create a full body output, a full body input must be provided.

Flux.2 Klein Image Expansion / Outpaint

Qwen Image 2512 Text to Image

Text to image

Qwen Image Edit 2511 Restore Damage Old Photograph

Restore Damage Old Photograph

Kling Omni One Video to Video Edit

floyoofficial

1.5k

API

Controlnet

Floyo API

Image2Image

LoRA

Z-Image Turbo

Z-Image Turbo with Controlnet 2.1 and Qwen VLM

Creating Accurate Variety of Images

DyPe and Z-Image Turbo for High Quality Text to Image

SeedVR2 and TTP Toolset 8k Image Upscale

Wan Alpha Create Transparent Videos

Veo 3.1 Image to Video - First Frame and Optional Last Frame

Seedance I2V: Image to Video in Minutes

AniSora 3.2 and Wan2.2: Best Practices for Generating Smooth Character 3D Spin

SeC Video Segmentation: Unleashing Adaptive, Semantic Object Tracking

HunyuanVideo Foley: Create a Lifelike Sound

Start/End Frame Multi-Video via Floyo API

Compare between Luma Dream Machine and Kling Pro 1.6 via Fal API

Flux

Image

Inpaint

Image Inpainting

Change specific details on just a portion of the image, sometimes known as inpainting or Erase & Replace.

Key Inputs:

- Image reference: Use any JPG or PNG showing your subject clearly.
- Masking tools: Right-click to reveal the masking tool option, and create a mask of the desired area to inpaint.
- Prompt: As descriptive a prompt as possible to help guide what you would like replaced in the masked area.

floyoofficial

1.6k

Image

Inpaint

LoRa

Image Inpainting with LoRA

Change specific details on just a portion of the image for inpainting or Erase & Replace, adding a LoRA for extra control.

floyoofficial

1.6k

MMaudio

Video to Video

MMAudio: Video to Synced Audio

Generate synchronized audio with a given video input. It can be combined with video models to get videos with audio.

Controlnet

SD1.5

Scribble to Image

Turn your scribbles into a beautiful image with only a drawing tool and a text prompt.

Key Inputs:

- Scribble: Create your scribble with the painting and design tools.
- Prompt: As descriptive a prompt as possible.
- Width & height: Optimal resolution settings are noted.
- ControlNet Strength: The amount of adherence to the original image. Higher values give more adherence.
- Start Percent: The point in the generation process where the control starts exerting influence. (Have it start later, to let AI imagine first.)
- End Percent: The point in the generation process where the control stops exerting influence. (Have it end sooner, to let AI finish it off with some variation.)

Wan2.1 InfiniteTalk Video to Video

Controlnet

SD1.5

Sketch to Image

Turn your sketches into full-blown scenes.

Key Inputs:

- Image reference: Use any JPG or PNG showing your subject clearly.
- Prompt: As descriptive a prompt as possible.
- Width & height: In pixels.
- ControlNet Strength: The amount of adherence to the original image. Higher values give more adherence.
- Start Percent: The point in the generation process where the control starts exerting influence. (Have it start later, to let AI imagine first.)
- End Percent: The point in the generation process where the control stops exerting influence. (Have it end sooner, to let AI finish it off with some variation.)

Kling Omni One Image to Video

Clothing & Accessories Replacement

Animation

Filmmaking

Image2Video

Kling 2.6 Pro

Kling 2.6 Pro for Image to Video

Create stunning videos using Kling 2.6 Pro

Chatterbox Text to Speech

Text to speech workflow using Chatterbox

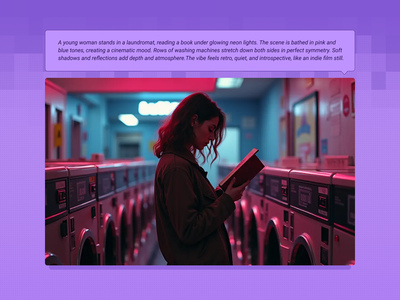

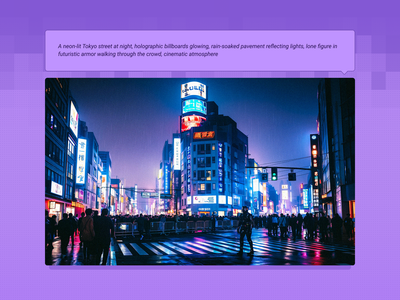

text2image

Wan2.1

Wan 2.1 Text2Image

Created by @yanokusnir on Reddit, please support the original creator! https://www.reddit.com/r/StableDiffusion/comments/1lu7nxx/wan_21_txt2img_is_amazing/

If this is your workflow, please contact us at team@floyo.ai to claim it!

Original post from the creator:

Hello. This may not be news to some of you, but Wan 2.1 can generate beautiful cinematic images. I was wondering how Wan would work if I generated only one frame, so as to use it as a txt2img model. I am honestly shocked by the results.

All the attached images were generated in Full HD (1920x1080px), and on my RTX 4080 graphics card (16GB VRAM) it took about 42s per image. I used the GGUF model Q5_K_S, but I also tried Q3_K_S and the quality was still great.

The only postprocessing I did was adding film grain. It adds the right vibe to the images and it wouldn't be as good without it.

Last thing: For the first 5 images I used the euler sampler with the beta scheduler - the images are beautiful with vibrant colors. For the last three I used ddim_uniform as the scheduler and as you can see they are different, but I like the look even though it is not as striking. :) Enjoy.

character replacement

character swap

image to video

masking

Points Editor

vertical video

Wan2.2 Animate

WanAnimateToVideo

Vertical Video Character Face & Actor Swap (Wan 2.2 Animate)

character design

consistency

film production

image to video

video generation

wan

Wan 2.7 Reference to Video with Motion Control

Wan2.2 and Bullet Time LoRA: Transform Static Shots into Product Spins

Character Sheet

Face Swap

Flux

Image

Image to Image Character Sheet Face Swap with Ace+

Take a character sheet and use a reference image to replace all the faces with that new person.

Key Inputs:

- Load Image: Use any JPG or PNG showing your pose sheet.
- Load New Face: Use any JPG or PNG showing your subject clearly that you would like to swap into the pose sheet.
- Prompt: As descriptive a prompt as possible.
- Width & height: Optimal resolution settings are noted at 1024px x 1024px.
- Keep Proportion: Enable keep_proportion if you want the output to keep the same size as the input.
- Denoise: The amount of variance in the new image. Higher values give more variance.

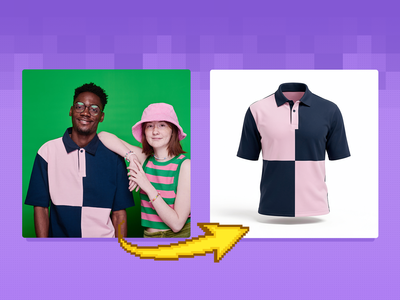

FLUX

FLUX.2 Klein

Ghost Mannequin

Image2Image

SAM3

FLUX.2 Klein 9B + SAM3 + GhostMannequin LoRA

Create ghost mannequin clothing images using FLUX.2 Klein, SAM3, and the Ghost Mannequin LoRA.

floyoofficial

1.6k

character design

consistency

happy horse

image to video

reference to video

video generation

Turn up to 9 reference images plus a prompt into a 5-second video with Happy Horse 1.0. Keep characters, products, and style consistent across the shot.

Happy Horse 1.0 Reference to Video


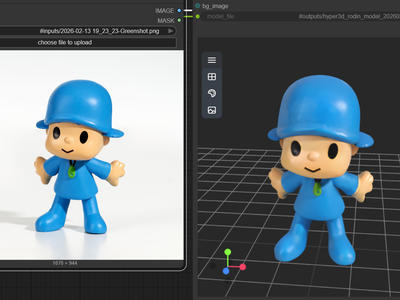

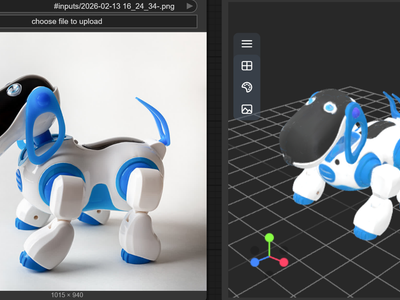

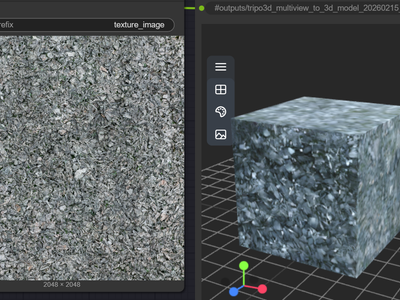

3D

3D Model

Hyper3D Rodin v2

Image to 3D

Rodin v2

Turn your images into 3D using Hyper3D Rodin v2

Hyper3D Rodin V2 for Image to 3D


Wan2.6 Text to Video

Tripo3D for Image to 3D

Create a 3D model using Tripo3D v2.5

fx-integration

image-to-image

qwen

reference-image

upscaling

video-conditioning

wan21-funcontrol

Vertical Video FX Inserter - Qwen + Wan 2.1 FunControl

Chroma 1 Radiance Text to Image

Chroma 1

floyoofficial

2.5k

Flux

FLUX2 Klein

Photography

Text2Image

Create a high-quality image using the 9B model of FLUX.2 Klein

FLUX.2 Klein 9B for Text to Image


Masking

Segmentation

Video

Use a video clip and visual markers to segment the subject, or its inverse, and create masks.

Key Inputs
- Load Video: Use any MP4 that you would like to segment or create a mask from.
- Select subject: Use 3 green selectors to identify your subject and one red selector to identify the space outside your subject.
- Modify markers: Shift+Click to add markers, Shift+Right Click to remove markers.
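The green and red selectors described above correspond to what SAM-style models usually call positive and negative point prompts. The sketch below is only an illustration of that idea: the function name, the coordinate values, and the exact data layout are assumptions, not the format the workflow's points editor actually emits.

```python
from typing import List, Sequence, Tuple

POSITIVE = 1  # green selector: "this pixel belongs to the subject"
NEGATIVE = 0  # red selector: "this pixel is outside the subject"

Point = Tuple[int, int]  # (x, y) in pixel coordinates of the first frame


def build_point_prompts(
    subject_points: Sequence[Point],
    background_points: Sequence[Point],
) -> Tuple[List[List[int]], List[int]]:
    """Pair every marker with a label so a segmenter can tell them apart."""
    points = [list(p) for p in list(subject_points) + list(background_points)]
    labels = [POSITIVE] * len(subject_points) + [NEGATIVE] * len(background_points)
    return points, labels


# Three green markers on the subject, one red marker on the background
# (hypothetical coordinates for a 720 x 1280 vertical clip).
points, labels = build_point_prompts(
    subject_points=[(320, 180), (300, 400), (340, 620)],
    background_points=[(40, 40)],
)
```

Swapping the two argument lists is one way to think about masking "the inverse" of the subject.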

Video Masking with Sam2 Comparison


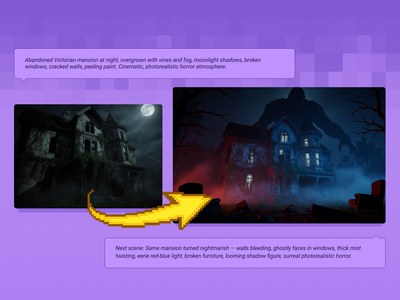

Qwen Image Edit 2509 + Flux Krea for Creating Next Scene

floyoofficial

2.4k

Flux

Flux.2 Klein

Image2Image

Inpainting

LanPaint

Inpaint images using FLUX.2 Klein and LanPaint

FLUX.2 Klein 9B Image Inpainting


LoRa

Text2Video

Wan2.1

Generate a high-quality video from a text prompt and add in a LoRA for extra control over character or style consistency.

Key Inputs
- Prompt: As descriptive a prompt as possible.
- Load LoRA: Load your reference model here.
- Width & height: Optimal resolution settings are noted.
- File Format: H.264 and more.

Text to Video and Wan with optional LoRA


Animation

Filmography

Image2Video

LTX 2

Open Source

A workflow for LTX 2 image to video using the distilled model

LTX 2 19B Fast for Image to Video


SAM3 for Video Masking using Text

Create video masks using SAM3 with text prompts only.

Vertical Video FX Inserter / Element Pass with Seedream + Wan

Vertical Video Light & Mood Shift

Wan2.2 Fun Camera for Camera Control

360 Degree Product Video Using Nano Banana Pro

Image2Image

Image Edit

Qwen Image Edit 2511

Create different angles of an image using Qwen Image Edit 2511 and a special node

Camera Angle Control with QwenMultiAngle


FlatLogColor LoRA and Qwen Image Edit 2509

SAM3 Image Segmentation

Wan2.2 Fun and RealismBoost LoRA for V2V

Nano Banana Pro Edit Image to Image

Light Restoration LoRA + Qwen Image Edit 2509 Image to Image

Flux

Image

Redux

Create variations of a given image, or restyle it. Use it to refine, explore, or transform ideas and concepts.

Key Inputs
- Image reference: Use any JPG or PNG showing your subject clearly.
- Width & height: In pixels.
- Prompt: As descriptive a prompt as possible.
- Strength (step 5: value): Strength of the Redux model. Adjust the value to increase or decrease the amount of variation.

Image Redux with Flux


Hunyuan

LoRa

Text2Video

Integrate a custom model with your text prompt to create a video with a consistent character, style, or element.

Key Inputs
- Prompt: As descriptive a prompt as possible. Make sure to include the trigger word from your LoRA below.
- Load LoRA: Load your reference model here.
- Width & height: Resolution settings are noted in pixels.
- Guidance strength (CFG): Higher numbers adhere more to the prompt.
- Flow Shift: For temporal consistency; adjust to tweak video smoothness.

Text to Video + Hunyuan LoRA


Image to Video with Seedance Pro API

🔥Create Stunning 10 Second 3D Spin Shots in Seedance for Characters, Products, and Hero Scenes

Qwen Image Edit 2511 Lightning - Multi-Image Edit

Grok Imagine for Text to Video

Create excellent videos using Grok Imagine for T2V

Grok Imagine for Image Edit

Edit images using Grok Imagine

InfiniteTalk - Lip Sync Any Video to Any Audio

anime

character design

concept art

seedvr

text to image

upscaling

z-anime

Generate anime and illustration art from text with Z-Anime, then upscale to 1080p with SeedVR. Compare the base render and the upscaled version side by side.

Z-Anime - Text to Image with SeedVR Upscale


Flux

Image

Outpaint

Extend your images out for a wider field of view or just to see more of your subject. Expand compositions, change aspect ratios, or add creative elements while maintaining consistency in style, lighting, and detail while seamlessly blending with the existing artwork.

Flux Fill Dev Image Outpainting


Multiple Angle Lighting LoRA + 2511

digital illustration

image to image

recraft

style transfer

text to image

Transform an existing image with Recraft V3. Upload a reference, write a prompt, set strength, and pick a style. Strength controls how much of the original survives.

Recraft V3 Image to Image - Style Transfer


consistency

e-commerce

image to video

product photography

seedance 2.0

video generation

Animate jewelry from product photos with Seedance 2.0. Upload a start frame and up to 6 reference angles, describe the camera move, and get a 5-second clip.

Seedance 2.0 for Jewelry Scene Animator


Qwen Image Edit – Multi-Angle Camera View

3D

Animation

Architecture

Flux

Game Development

Hunyuan 3D

Image to 3D

Upscaling

Create a 3D model from a reference image with Flux Dev texture upscaling.

Image to 3D with Hunyuan3D w/ Texture Upscale


Anima2

character design

concept art

fantasy

Text2Image

Generate images with Anima 2, a model built for anime and fantasy art. Write a prompt, set your resolution, and get stylized results in one run. Free to try.

Anima Preview 3 - Text to Image


Z-Image Turbo + Chord Image to PBR Material

Seedance Text to Video: Create Stunning 1080p Videos Instantly

LTX 2.3 Pro Text to Video

Next-Level Motion from Images using MiniMax

Flux

Image

LoRa

Upscale

Create a larger, more detailed image, with an extra AI model for fine-tuned guidance.

Key Inputs
- Load Image: Use any JPG or PNG showing your subject clearly.
- Load LoRA: Load your reference model here.
- Prompt: As descriptive a prompt as possible.
- Upscale by: The factor of magnification.
- Denoise: The amount of variance in the new image. Higher values produce more variance.

Image Upscaler with LoRA


VibeVoice Text to Speech Multi Speaker

Speech Multi Speaker

ElevenLabs Text to Speech


Anything2Real 2601A

Kling Master 2.0 Create Engaging Video Content

Vertical Video Background & Scene Rebuild

Ovi: Create a Talking Portrait

Studio Relighting for Composited Products

Image to Video

LTX2.3

Turn a single portrait into vertical video with LTX 2.3. The VBVR LoRA holds face identity steady and gives motion the physical weight that I2V usually loses.

LTX 2.3 Face-Consistent Image to Video with VBVR


API

Filmography

Filmmaking

Floyo API

Image2Video

LTX 2 Fast

Image to Video using LTX 2 Fast API

LTX 2 Fast API for Image to Video


Create Photorealistic Packaging from Dielines

Flux LoRA Trainer

3D Print LoRA and Flux Kontext for Image to 3D

Insert Products in Ecommerce Ads - NanoBanana Pro

Wan2.1 + WanMOVE for Animating Movement using Trajectory Path

floyoofficial

1.1k

FantasyTalking

Image2Video

Lipsync

Wan2.1

Create a high-quality lipsync video from an image with Wan2.1 FantasyTalking.

Key Inputs
- Load Image: Select an image of a person with their face in clear view.
- Load Audio: Choose an audio file.
- Frames: The number of frames to generate.

Wan2.1 and FantasyTalking - Image2Video Lipsync


GPT Image 1.5 for Image Editing

Qwen3 Thinking Prompt Enhancer

Image2Video

LTX

Video

Used for image to video generation, including first frame, end frame, or other multiple key frames.

Key Inputs
- Load Image (Start Frame): Use any JPG or PNG showing your subject clearly to start your video.
- Load Image (End Frame): Use any JPG or PNG showing your subject clearly to act as the last part of your video. Make sure it's the same resolution as the start frame.
- Width & height: Optimal resolution settings are noted. LTX maximum resolution is 768x512.
- Prompt: As descriptive a prompt as possible.
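The two resolution rules above (a 768x512 ceiling, and matching start and end frames) can be checked before a run. This is a minimal sketch, not part of the workflow: the function names are made up for illustration, and snapping to a multiple of 32 is an assumption about what the model accepts rather than something the description states.

```python
from typing import Tuple

MAX_WIDTH, MAX_HEIGHT = 768, 512  # ceiling quoted in the description above
MULTIPLE = 32                     # assumption: dimensions snap to multiples of 32


def fit_resolution(width: int, height: int) -> Tuple[int, int]:
    """Scale (width, height) down to fit the ceiling, keeping aspect ratio."""
    scale = min(1.0, MAX_WIDTH / width, MAX_HEIGHT / height)
    new_w = max(MULTIPLE, int(width * scale) // MULTIPLE * MULTIPLE)
    new_h = max(MULTIPLE, int(height * scale) // MULTIPLE * MULTIPLE)
    return new_w, new_h


def same_resolution(start: Tuple[int, int], end: Tuple[int, int]) -> bool:
    """The end frame should match the start frame's (width, height)."""
    return start == end


start_size = fit_resolution(1536, 1024)  # a frame twice the ceiling in each dimension
end_size = fit_resolution(1536, 1024)
frames_ok = same_resolution(start_size, end_size)
```

Images that are already within the ceiling are left at their size, apart from the rounding to a multiple of 32.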

Image to Video with Multiframe Control


SRPO Next-Gen Text-to-Image

Vace

Video

Wan

Created by @davcha on Civitai, please support the original creator! https://civitai.com/models/1674121/simple-self-forcing-wan13bvace-workflow

If this is your workflow, please contact us at team@floyo.ai to claim it!

Original guide from creator:

This is a very simple workflow to run Self-Forcing Wan 1.3B + Vace. It only uses a single custom node, which everyone making videos should have: Kosinkadink/ComfyUI-VideoHelperSuite. Everything else is pure comfy core.

You'll need to download the model of your choice from lym00/Wan2.1-T2V-1.3B-Self-Forcing-VACE on Hugging Face, and put it inside your /path/to/models/diffusion_models folder.

This workflow can be used as a very good start for experimenting. You can refer to [2503.07598] VACE: All-in-One Video Creation and Editing for how to use Vace. You don't need to read the paper of course; the information you are interested in is mostly at the top of page 7, which I reproduce in the following.

In the WanVaceToVideo node, you have 3 optional inputs: control_video, control_masks, and reference_image.

The names control_video and control_masks are a little bit misleading. You don't have to provide a full video. You can in fact provide a variety of things to obtain various effects. For example:
- If you provide a single image, it's more or less equivalent to image2video.
- If you provide a sequence of images separated by empty images (img1, black, black, black, img2, black, black, black, img3, etc.), it's equivalent to interpolating between all these images, filling in the blacks. A special case that makes this clear: img1, black, black, ..., black, img2 is equivalent to start_img, end_img to video.

control_masks controls where Wan should paint. Wherever the mask is 1, the original image will be kept. So you can, for example, pad and/or mask an input image, use that image and mask as control_video and control_masks, and you'll basically do an image2video inpaint and outpaint. If you input a video in control_video, you can control where the changes should happen in the same way, using control_masks. You'll need to set one mask per frame in the video.

If you input an image preprocessed with openpose or a depth map, you can finely control the movement in the video output.

reference_image is an image that you feed to Wan+Vace that serves as a reference point. For example, if you put the image of someone's face here, there's a good chance you'll get a video with that person's face.
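The keyframe layout from the guide (img1, black, black, black, img2, ...) can be sketched as plain data. This is an illustration only: `build_control_sequence` is a hypothetical helper, frames are stand-in labels rather than image tensors, and each mask is reduced to one value per frame. The mask polarity follows the guide's wording (1 keeps the original); it is worth checking against the node you actually run, since the opposite convention also exists.

```python
from typing import List, Optional, Tuple

BLANK = None  # stands in for an empty (black) frame that the model should fill


def build_control_sequence(
    keyframes: List[str],
    gap: int,
    keep_value: int = 1,  # per the guide: mask value 1 means "keep the original"
) -> Tuple[List[Optional[str]], List[int]]:
    """Interleave keyframes with `gap` blank frames and emit one mask value per frame."""
    if gap < 0:
        raise ValueError("gap must be non-negative")
    frames: List[Optional[str]] = []
    masks: List[int] = []
    for index, key in enumerate(keyframes):
        if index > 0:
            frames.extend([BLANK] * gap)            # frames to be generated
            masks.extend([1 - keep_value] * gap)
        frames.append(key)                          # frame to be preserved
        masks.append(keep_value)
    return frames, masks


# Start frame, three frames to fill, end frame: the "start_img, end_img" special case.
frames, masks = build_control_sequence(["img1", "img2"], gap=3)
```

Passing a single keyframe yields a one-frame sequence, which matches the guide's "single image" case before any padding to the target length.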

Simple Self-Forcing Wan1.3B+Vace workflow


floyoofficial

1.6k

Camera Control

Image2Image

LoRA

Qwen

Qwen Image Edit 2509

Re-render your subject from any camera angle with Qwen Image Edit 2509 and a Multi-Angle LoRA. Pan, tilt, rotate, wide-angle, or close-up. No trigger word.

Qwen Image Edit 2509 + Multi-Angle LoRA for Camera


Block-wise Image Upscaling with Qwen


animation

Ditto

lora

VACE

Video2Video

Wan

Upload any video, describe a new style, and Wan 2.1 rewrites every frame. Ditto keeps motion and structure intact across anime, Pixar, clay, and dozens more.

Wan 2.1 Vid2Vid Style Transfer with Ditto


3D

Chord

Game Design

PBR Material

Text to 3D

Ubisoft

Create a 3D game material asset using the Chord model from Ubisoft

Chord for PBR Material Generation using Text to 3D


Meshy v6 for Image to 3D Model

Create a 3D model from Image using Meshy v6

Wan2.1 and ATI for Control Video Motion: Draw Your Path, Get Your Video

Craft Stunning Edits Instantly with Nano Banana Edit

audio

Audio2Audio

Chatterbox

tts

TTS Audio Suite

voice conversion

Convert any voice to match a target speaker using ChatterBox TTS. Upload source and narrator audio, run it, get back a converted MP3. No voice training needed.

Voice Changer using TTS Audio Suite (ChatterBox)


animation

character design

image to video

kling

video generation

Apply motion from a reference video to a still image with Kling 3.0 Pro.

Kling 3.0 Pro Motion Control


image to video

ltx 2

text to video

video generation

Add seconds to an existing video with LTX 2.3. Upload a clip, set the duration and mode

LTX 2.3 - Extend Video


API

FloyoAPI

Recraft

Text2Image

Generate images with Recraft V3 from a text prompt. Choose a preset size or custom dimensions, pick a style, and run.

Recraft V3 Text to Image


API

Floyo API

Kling

MotionControl

Transfer movements from a reference video to any character image.

Kling 3.0 Standard Motion Control


Flux

Image to image

Opensource

Style transfer

Transfer makeup from a reference photo onto a portrait using FireRed Image Edit 1.1. Upload a face and a makeup reference, and the model applies the look while keeping pose and facial features intact.

FireRed Image Edit - Makeup Transfer


LTX 2.3 Image to Video with Two-Pass Upscaling

Kling O3 Video to Video — Standard Reference

Capybara for Image Editing

Edit your cool images using Capybara

LongCat for Text to Image

Create cool images using LongCat

LTX 2.3 Audio to Video

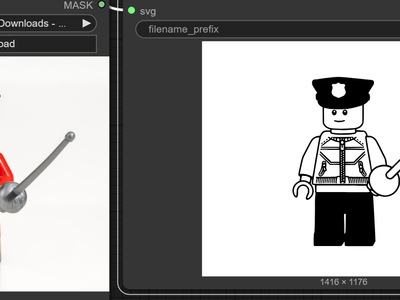

Image to SVG

Qwen Image Edit 2511

SVG

SVG Potracer

Create an SVG image using Qwen Image Edit and the SVG Potracer node

SVG Potracer + Qwen Image 2511 for Image to SVG


Audio2Audio

SoproTTS

Text to Speech

TTS

Turn your text into excellent speech using SoproTTS

Sopro for Text to Speech


background removal

film production

vfx

video generation

Upload green screen footage and get a clean alpha matte plus composite preview. Corridor Key's neural network handles hair, motion blur, and transparency.

Corridor Key Green Screen Keying for Video


SopranoTTS for Text to Speech

Turn text into speech using Soprano TTS

Nano Banana Pro - Game Art Restyling

LTX 2.0 – Prompting & Dynamic Camera Movement

AILab

Audio to Text

Speech to Text

STT

Transcribe

Create text from speech using Whisper STT

Whisper STT


ACE-Step 1.5 for Music Generation

Create stunning music using ACE Step 1.5

concept art

lora

portrait

SDA

text to image

z-image turbo

Generate images with Z-Image Turbo while the SDA diversity LoRA stops every seed from producing the same pose and composition. 8 steps, 2x upscale to 2048.

Z-Image Turbo + SDA LoRA for Diverse Text to Image


Z-Image Base - Text to Image w/ LoRA

Run Z-Image Base with a custom LoRA

Vidu Q3 for Image to Video

Bring your images to life

Image2Image

Image Editing

Seedream 4.5

Text2Image

An all-purpose Seedream 4.5 workflow for image generation

Seedream 4.5 Unified for Image Generation


Minimax Speech 2.8 HD for Text to Speech

Create realistic speech using Minimax speech 2.8

Image Edit

Qwen

Qwen Image Edit 2511

Relighting

Relight images using the Qwen Multiangle Light node

Qwen Multiangle Light with Qwen Image Edit 2511


SAM3 for Video Masking using Points

Create video masks using SAM3 with point prompts only.

Grok Imagine for Text to Image

Create cool images using Grok Imagine

Floyo API

Image2Video

PixVerse

Swap objects, characters, and backgrounds using PixVerse

Pixverse Swap for Image to Video Swap


animation

film production

image to video

vfx

video generation

Turn any image into video with Seedance 2.0 by ByteDance. Built-in audio generation, start and end frame control, and clips up to 10 seconds.

Seedance 2.0 - Image to Video


Kandinsky for Text to Video

Create excellent videos using Kandinsky

3D Products with Logo - Wan2.6 Image to Video

Static Watermark Remover

GPT-Image 1.5

Image2Image

Image2Video

Kling 2.6

Text2Image

VLM

Create a high quality demo for your products using Kling 2.6 Image to Video

Create Product Demo from Concept to Video


Seedance 2.0 Reference-to-Video

Insert Product into Existing Ad

Image2Video

Kling Omni One

Next Scene LoRA

Qwen Image Edit 2511

Reference2Video

Create a reshoot for a character

Character Reshoot using Qwen Edit 2511 + Kling O1


Filmography

LTX 2 Pro

Open Source

Text2Video

Videography

An open-source LTX 2 Pro workflow for text to video

LTX 2 19B Pro for Text to Video


Video Detailer using LTX 2 Vid2Vid

Enhance the detail of a video

Camera Control

Image2Vid

Qwen Image Edit 2511

Vid2Vid

Wan2.6

Use witness cameras to recreate additional shots that were not captured by principal photography

Camera Angle Creation using Image2Vid


Seedance 2.0 Fast Reference-to-Video

ChatterBox

Higgs

Text to Speech

TTS

VibeVoice

A TTS Audio Suite workflow that can use different types of audio models.

Multi Model for Voice Conversion and Text to Speech


LTX 2 Retake Video for Video Editing

Create Cinematic Poster & Ad from Your Product

LTX 2 Fast API for Text to Video

Text to video using LTX 2 Fast API

Wan2.1 + SCAIL for Animating Images for Movement

Qwen Image - Text to 360° HDRI Panorama

Change Product Shots with NanoBanana Pro

Vertical Video Prop & Object Replacement Using Seedream + Wan 2.2

flux

flux 2 klein

image to image

lora

outpainting

panorama

Turn any image into a full 360 equirectangular panorama with Klein 9B and a 360 ERP outpaint LoRA. Cut flat camera shots at any angle from the result.
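For readers unfamiliar with the equirectangular (ERP) layout, the sketch below shows the standard mapping from a viewing direction to a pixel in the panorama, which is the lookup a flat "camera cut" relies on. It is a generic illustration, not the workflow's implementation; the function name and the conventions (yaw 0 at the image center, positive yaw to the right, positive pitch up) are assumptions.

```python
from typing import Tuple


def direction_to_pixel(
    yaw_deg: float, pitch_deg: float, width: int, height: int
) -> Tuple[float, float]:
    """Map a viewing direction to (x, y) in an equirectangular image.

    Longitude spans the full width (360 degrees) and latitude spans the
    full height (180 degrees), so the mapping is linear in both angles.
    """
    if not -90.0 <= pitch_deg <= 90.0:
        raise ValueError("pitch must be within [-90, 90] degrees")
    yaw = ((yaw_deg + 180.0) % 360.0) - 180.0   # wrap into [-180, 180)
    x = (yaw / 360.0 + 0.5) * width             # 0 at the left edge
    y = (0.5 - pitch_deg / 180.0) * height      # 0 at the top edge
    return x, y


# Looking straight ahead lands on the center of a 2048 x 1024 panorama.
center = direction_to_pixel(0.0, 0.0, 2048, 1024)
```

A flat shot at a given angle is then built by evaluating this mapping for every ray of a virtual pinhole camera and sampling the panorama at the resulting coordinates.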

Flux 2 Klein 9B + 360 Panorama ERP LoRA


flux

flux 2 klein

image to image

inpainting

outpainting

panorama

Edit 360 panoramas with Flux 2 Klein 9B. Select a region, describe the change, and the edit gets composited back into your full panoramic image. No warping.

Flux 2 Klein 9B Panorama Inpainting


Veo 3.1 Image to Video

Kling Omni 1 Reference to Video

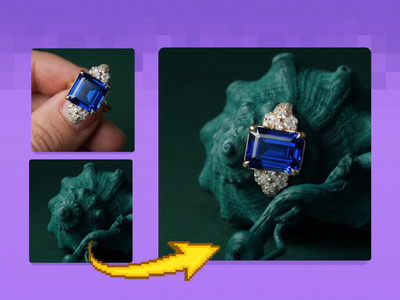

e-commerce

image to image

nano banana 2

product photography

Drop a ring photo and a background into the same workflow. Nano Banana 2 composites the jewelry into the scene seven ways so you can cherry-pick the best take.

Nano Banana 2 for Jewelry Scene Compositor


Kling 2.5 Image to Video

Qwen Image Edit 2509 and Grayscale to Color LoRA

Vertical Video Scene Extension & Coverage Generator using Seedream + Wan

Vertical Video Scene Extension & Coverage Generator

API

Floyo API

Topaz

Video2Video

Video Upscale

Upload a video, pick your enhancement model and quality level, and Topaz Video AI sharpens, denoises, and upscales it. Audio is preserved. Output is H265 MP4.

Topaz Video Upscaler for Sharper Results


Kling 3.0 for Video Generation

Coming soon page for Kling 3.0

Realistic Product or Props Replacement

animation

concept art

film production

image to video

pixverse

pixverse c1

Animate a reference image into cinematic video with PixVerse C1. Pick your duration up to 15 seconds, resolution up to 1080p, and optional native audio.

PixVerse C1 - Image to Video


MiniMax Text-to-Video will Bring Your Creative Concepts to Life with Realistic Motion

Boost Your Creative Video: Comprehensive Solutions with Seedance Image to Video

Film Grain + Simple Upscale

Modify the Image using InstantX Union ControlNet

flux

flux 2 klein

image to image

style transfer

Edit images with Flux 2 Klein 9B in 4 steps. KV Cache speeds every run by reusing attention work across steps. Upload an image, describe the edit, hit Run.

Flux 2 Klein 9B + KV Cache for Image Editing


image to text

llm

open source

qwen

text generation

vlm

Run Qwen 3.5 9B in ComfyUI as a text-only LLM or as a vision language model. Attach an image or a video, write your prompt, and get text back.

Qwen 3.5 9B for Open Source LLM and VLM


gpt-image-2

image-generation

openai

t2i

text-to-image

Generate stunning, highly detailed images from just a text prompt using GPT Image 2.

GPT Image 2: Text to Image


e-commerce

gpt image 2

image to image

inpainting

product photography

Edit images with OpenAI's GPT Image 2. Upload one or two images, write what you want changed, and the model rewrites the scene while keeping details intact.

GPT Image 2: Image Editing


API

Bedrock

Nova Canvas

SDXL

Text to Image

Titan

Generate and compare images between 3 different models powered by Amazon Bedrock.

Key Inputs
- Prompt: As descriptive a prompt as possible.

Models
- SDXL: Solid all-around performer with strong prompt adherence and wide style range.
- Titan: Versatile model with built-in editing features and customization flexibility.
- Nova Canvas: Quick iterations with creative flair, ideal for brainstorming and concept exploration.

Amazon Bedrock - Text to Multi-Image with SDXL, Titan and Nova Canvas


Graphic Design Recomposer - Reframe Ads

Image to Talking Video - LTX 2.3 + ElevenLabs UGC

consistency

film production

happy horse

image to video

product photography

video generation

Animate a still image with Happy Horse 1.0. Upload a frame, describe the motion you want, get a 5-second clip with stable physics and consistent details.

Happy Horse 1.0 - Image to Video


concept art

e-commerce

image to image

nano banana 2

product photography

style transfer

Upload three reference images and Nano Banana 2 generates a new jewelry environment that matches their color, lighting, and visual style. Mood board to scene.

Nano Banana 2 for Jewelry Environment Creator

Alibaba

Audio

Text to Video

Wan 2.7

Generate video from a text prompt using Alibaba's Wan 2.7 model. Set your resolution, aspect ratio, and duration, then hit Run. Audio input supported.

Wan 2.7 - Text to Video

bitdance

T2V

text to image

Generate photorealistic images from text prompts using BitDance 14B, a 14-billion parameter autoregressive model that predicts up to 64 visual tokens per step.

BitDance 14B - Text to Image

Captioning

LLM

Prompt Generator

Qwen3VL

VLM

Upload an image or video and get a detailed text description from Qwen3-VL. Choose your model size, pick a preset prompt, or write your own. Runs in your browser.

Qwen3-VL Image and Video Captioning

audio

speech to text

srt

STT

subtitles

transcription

whisper

Upload any audio file and Whisper transcribes it into text with word-level and segment-level SRT subtitle files. Auto language detection included.

Whisper Speech-to-Text and SRT Subtitle Generator

subtitling

vid2vid

video generation

Upload a video and get it back with burned-in subtitles. Whisper transcribes the audio, then the text gets placed frame-by-frame with word-level timing.

Auto Subtitles with Whisper - Video to Video

Z-Image Turbo Inpainting

Grok

image-to-image

multi-style

prompt-based editing

Multi-Style Image Transformation Workflow (One Input → Multiple Outputs)

Multi-Style Image Transformation Workflow

API

Image2Image

Image Editing

Qwen Image Max Edit

Edit images with the flagship Qwen Image Max Edit model.

Qwen Image Max Edit for Editing Images

consistency

film production

happy horse

style transfer

vid2vid

video generation

Edit any video with Happy Horse 1.0 by uploading up to 5 reference images. Swap backgrounds, change subjects, or shift style. Original motion stays intact.

Happy Horse 1.0 Video Editing

audio

audio encryption

image to image

spectrogram

Turn any image into audio by encoding it as a spectrogram. Upload a picture, set your frequency range, and get an MP3 that shows your image when visualized.

Audio to Spectrogram using Orion4D Secret

audio

audio watermarking

content tracking

provenance

steganography

Upload any audio file, write the text to hide, and get back two watermarked copies. Steganography packs more data. Frequency encoding survives MP3 compression.

Audio Watermarking using Orion 4D Secret

audio transcription

qwen

speech to text

SRT

subtitle generation

Transcribe any audio file to text and timed SRT subtitles using Qwen3's speech recognition engine. Upload your audio, get broadcast-ready captions back.

Qwen3 ASR via TTS Audio Suite for SRT Builder

Cipher

Text

Encode plain text into cipher characters and decode it back. Paste a message, pick a cipher style, and get a disguised version only the matching decoder reads.

Text Manipulator

Kling O3 Pro Image to Video with Reference

Audio2Audio

Audio Editing

Step Audio EditX

Voice Cloning

Upload a voice sample, transcribe it automatically with Whisper, then use Step-Audio EditX to clone that voice speaking your custom script. No trigger word needed.

Step Audio EditX for Voice Cloning

SAM2

Segment Anything 2

video2video

Video Mask

Create a video mask frame by frame using Segment Anything 2.

Segment Anything 2 for Creating Video Mask

Kling 3.0 Pro for Text to Video

Create videos using Kling 3.0

ASR

Audio to Text

qwen

STT

Upload an audio file and Qwen3 ASR 1.7B transcribes it to text. Supports 52 languages, auto-detects the language, and handles noisy audio. No setup needed.

Qwen3 ASR 1.7B - Speech to Text

Audio2Audio

Step Audio EditX

Voice Editing

Edit existing voice recordings with Step-Audio EditX. Change emotion, dialect, or style. Whisper transcribes your audio so you describe the edit, not the source.

Step Audio EditX for Voice Editing

ComfySketch for Creating Images

Draw images using ComfySketch.

Vidu Q3 for Text to Video

Create good videos with Vidu Q3

Fish Speech Voice Cloning TTS with Emotion Tags

Emotion Tags

Meshy v6 Text to 3D Model

Create a 3D model from a text prompt using Meshy v6.

instrumental

minimax music 2.6

music generation

song generation

soundtrack

text to music

Text-to-music with Minimax Music 2.6. Generate songs with vocals and backing from a style prompt and lyrics, or toggle instrumental mode for score only.

Minimax Music 2.6 - Text to Music

Image Transformation using Anime2Reality LoRA

Kling 3.0 Standard for Text to Video

Create videos using Kling 3.0 Standard

Kling 3.0 Standard for Image to Video

Animate images using Kling 3.0 Standard

Krea Wan 14B Video to Video

floyoofficial

1.0k

Image Editing

Qwen

Qwen Image Edit 2511

VNCCS Utils

Create different poses of a person using the VNCCS custom node and Qwen Image Edit 2511.

Qwen Image Edit 2511 and VNCCS Utils - Visual Pose

film production

pixverse

pixverse c1

text to video

vfx

video generation

Generate cinematic video from text with PixVerse C1. Up to 1080p, up to 15 seconds, with optional native audio synchronized in the same generation pass.

PixVerse C1 - Text to Video

Wan 2.2 T2V Workflow with UnifiedReward Flex LoRA

Audio Separation

Video to Audio

Upload a video, strip the audio, and split it into four clean stems (Bass, Drums, Other, and Vocals), then save your chosen stem as an MP3. No model required.

Audio Separation for Video to Audio

Single Image to Multiple Consistent Shots

animation

film production

image to video

video generation

wan

Turn any still image into a short 6-second video clip with Alibaba's Wan 2.7 model. Upload your photo, describe the motion you want, and run. 1080P output.

Wan 2.7 Image to Video

Flux.2 Klein INPAINT Segment Edit Accurate Image

image-to-image

Lipsync

reference-image

seedream

upscaling

Video-conditioning

wan2.1_funControl

Vertical Video Lighting & Mood Shift Using Seedream + Wan

FLUX.2 Klein 9B + Virtual Tryon LoRA

Try on clothes using Flux.2 Klein 9B and tryon LoRA from

concept art

e-commerce

image to image

portrait

style transfer

text to image

wan

Upload an image, describe what you want changed, and Wan 2.7 Pro rewrites it. Style transfers, scene edits, and generation with thinking mode built in.

Wan 2.7 Pro Unified Image Editing

image to video

ltx 2

retake

vid2vid

video generation

Re-generate a specific segment of an existing video with LTX 2.3.

LTX 2.3 - Retake Video

character design

consistency

film production

image to video

pixverse