Camera Angle Control with QwenMultiAngle

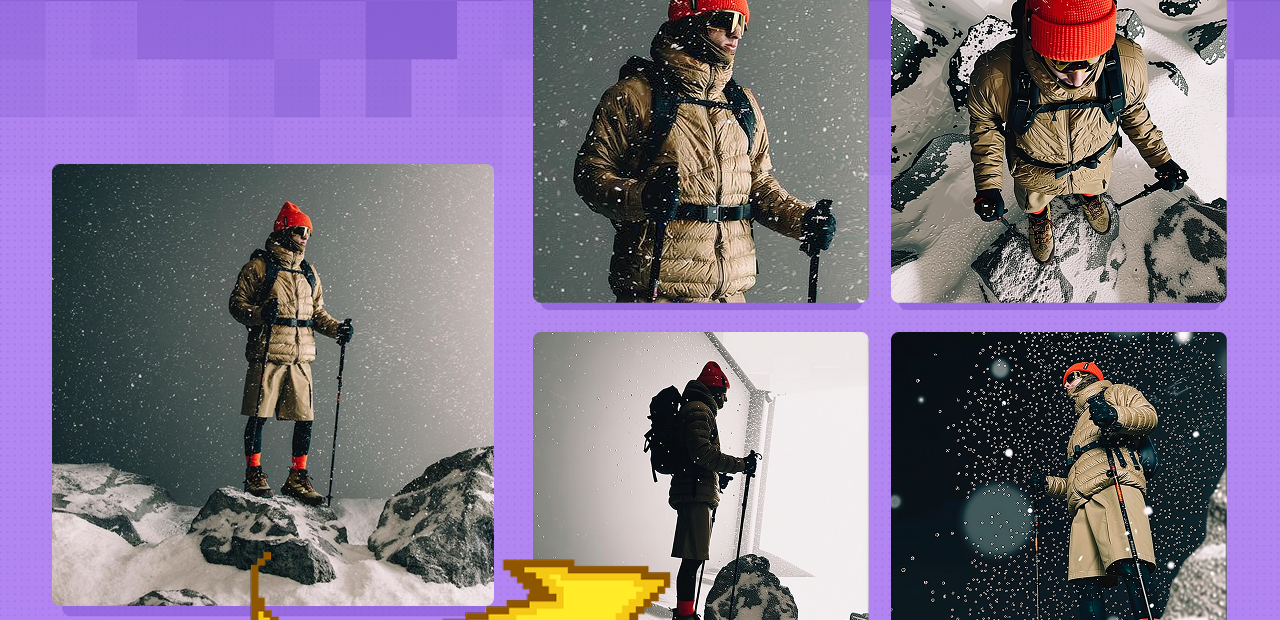

Create different camera angles of an image using Qwen Image Edit 2511 and a custom multi-angle node.

Image2Image

Image Edit

Qwen Image Edit 2511

Nodes & Models

WorkflowGraphics

UNETLoader

qwen_image_edit_2511_bf16.safetensors

LoadImage

LoraLoaderModelOnly

Qwen-Image-Edit-2511-Lightning-4steps-V1.0-bf16.safetensors

qwen-image-edit-2511-multiple-angles-lora.safetensors

TextEncodeQwenImageEditPlus

ModelSamplingAuraFlow

CFGNorm

KSampler

VAEDecode

PreviewImage

easy promptList

TextEncodeQwenImageEditPlusPro_lrzjason

This workflow turns camera control into a visual, slider‑based task: you pick an angle in a 3D widget, and Qwen Image Edit 2511 re‑renders the scene from that viewpoint.

Overview

The custom node acts as a 3D camera rig inside ComfyUI. It outputs descriptive angle text such as “front‑right quarter view, low‑angle shot, close‑up,” which is fed to the image‑edit model as its viewpoint prompt. Qwen Image Edit 2511, together with its multi‑angle LoRA, then generates consistent alternate perspectives of the same character or scene, which gives you virtual camera orbits, tilts, and shot‑size changes.
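
As a rough illustration of how slider values could become angle text, here is a minimal Python sketch. It is not the node's actual code: the preset tables, the degree values, the normalized distance scale, and the `camera_prompt` helper are all assumptions made for this example. It snaps each slider value to the nearest preset and joins the three labels.

```python
# Hypothetical preset tables: labels and numeric values are assumptions,
# not taken from the custom node's source code.
AZIMUTHS = {
    0: "front view",
    45: "front-right quarter view",
    90: "right side view",
    135: "back-right quarter view",
    180: "back view",
    225: "back-left quarter view",
    270: "left side view",
    315: "front-left quarter view",
}
ELEVATIONS = {
    -30: "low-angle shot",
    0: "eye-level shot",
    30: "elevated shot",
    60: "high-angle shot",
}
# Shot size keyed by an assumed normalized camera distance in [0, 1].
DISTANCES = {0.0: "close-up", 0.5: "medium shot", 1.0: "wide shot"}


def nearest_azimuth(angle):
    """Snap an angle in degrees to the closest preset, wrapping at 360."""
    angle = angle % 360

    def circular_gap(preset):
        diff = abs(angle - preset)
        return min(diff, 360 - diff)

    return min(AZIMUTHS, key=circular_gap)


def nearest(table, value):
    """Return the preset key closest to value on a linear scale."""
    return min(table, key=lambda preset: abs(preset - value))


def camera_prompt(azimuth, elevation, distance):
    """Build descriptive angle text from three slider values."""
    parts = [
        AZIMUTHS[nearest_azimuth(azimuth)],
        ELEVATIONS[nearest(ELEVATIONS, elevation)],
        DISTANCES[nearest(DISTANCES, distance)],
    ]
    return ", ".join(parts)


print(camera_prompt(40, -25, 0.1))
# front-right quarter view, low-angle shot, close-up
```

Snapping to a fixed set of labels is what makes the result repeatable: any slider position near 45° yields the same text, so the same viewpoint can be requested again without rewording the prompt.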

Why use it

Replaces brittle manual angle prompting (“3/4 view, low angle, medium shot…”) with precise, repeatable camera presets and sliders.

Produces multi‑angle coverage (front, sides, back, low/high angles, close/medium/wide) while preserving identity and environment, which is ideal for storyboards and shot planning.

Aligns AI image work with classic cinematography concepts (azimuth, elevation, shot size), so creative decisions map cleanly to what you see in the viewport.

Use cases

Designing full angle packs of a character (96 combinations of view direction × height angle × shot size) for animation, comics, or 3D reference.

Building storyboard sequences where each panel is the same scene from different camera positions (establishing wide → medium → close‑up → over‑the‑shoulder).

Preparing consistent reference frames for downstream video models or I2V pipelines that need coherent multi‑angle inputs.
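
The 96‑combination angle pack mentioned above can be enumerated with a short script. This is a sketch under one assumption: that the 96 comes from 8 view directions × 4 height angles × 3 shot sizes. The label strings are illustrative and may not match the exact wording the LoRA expects.

```python
from itertools import product

# Assumed factorization of the 96 combinations: 8 x 4 x 3.
# The label wording is illustrative, not confirmed by the LoRA's documentation.
VIEW_DIRECTIONS = [
    "front view",
    "front-right quarter view",
    "right side view",
    "back-right quarter view",
    "back view",
    "back-left quarter view",
    "left side view",
    "front-left quarter view",
]
HEIGHT_ANGLES = ["low-angle shot", "eye-level shot", "elevated shot", "high-angle shot"]
SHOT_SIZES = ["close-up", "medium shot", "wide shot"]


def angle_pack():
    """Return one prompt string per (direction, height, shot size) combination."""
    return [
        ", ".join(combo)
        for combo in product(VIEW_DIRECTIONS, HEIGHT_ANGLES, SHOT_SIZES)
    ]


pack = angle_pack()
print(len(pack))  # 96
print(pack[0])    # front view, low-angle shot, close-up
```

Each string in `pack` can then be supplied to the workflow as the angle prompt for one render, so a full character sheet or storyboard set comes from the same input image.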